We often need to know if one campaign (A) is better than another one (B). For example,

- Is it better to include the recipient’s name in the subject line?

- Is it better to have a fancy design (vs a plain design)?

- Will including a coupon in the email help?

- Should we send out emails on Thursdays (vs on Mondays)?

All of the above questions need real experiments to answer. However, after we run an experiment, how do we draw conclusions from it?

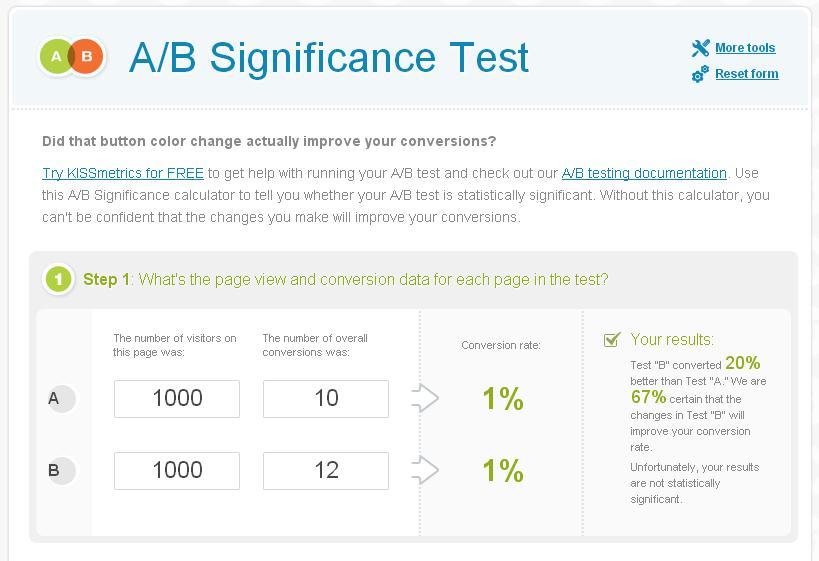

Let’s consider the following made-up example: we want to know whether including the recipient’s name helps. In campaign A the subject line is “Have you tried enzyme XYZ?” and in campaign B it is “Dr Smith, have you tried enzyme XYZ?”. Assume we sent 1000 emails and got 10 clicks for campaign A, and sent another 1000 emails and got 12 clicks for campaign B. Isn’t it obvious that B is better than A?

The answer is no. It is possible that the 2 extra clicks in B are simply due to chance. We can run a statistical test to see how confident we can be that B is better than A. We entered our campaign results into the following website, and you can see we are only 67% sure that B is better than A, which is not significant.
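
To see where a number like 67% can come from, here is a minimal Python sketch. It assumes the tool runs a one-sided two-proportion z-test and reports the normal CDF of the z-score as the "sureness"; the website's actual method is not stated here, and the function name `confidence_b_beats_a` is made up for illustration.

```python
import math

def confidence_b_beats_a(clicks_a, sent_a, clicks_b, sent_b):
    """One-sided two-proportion z-test (assumed method, not confirmed by the tool).

    Returns the standard normal CDF of the z-score, which is loosely
    read as "how sure we are that B is better than A".
    """
    rate_a = clicks_a / sent_a
    rate_b = clicks_b / sent_b
    # Pooled click rate under the assumption that A and B perform the same
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF written with the error function
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))

# Numbers from the example: 10 clicks out of 1000 for A, 12 out of 1000 for B
confidence = confidence_b_beats_a(10, 1000, 12, 1000)
print(f"{confidence:.0%}")  # prints 67%
```

A common convention is to call a result significant only at 95% or above, so 67% falls well short.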

What should we do if we get a non-significant result? It means either that A and B are really not different, or that the number of emails we sent is too small to detect the difference. 1000 is a reasonable number, but to make sure, try increasing the number of emails to 5000 or 10,000 and see if it makes a difference.
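
The effect of sample size can be sketched in Python as well. This is only an illustration: it assumes the click rates stay at exactly 1.0% and 1.2% as the list grows (the click counts for 5000 and 10,000 emails are hypothetical, not real results), and it assumes a one-sided two-proportion z-test, which may differ from what the website does.

```python
import math

def confidence_b_beats_a(clicks_a, sent_a, clicks_b, sent_b):
    """One-sided two-proportion z-test (assumed method), as a normal CDF value."""
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (clicks_b / sent_b - clicks_a / sent_a) / std_err
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))

# Hypothetical: keep the 1.0% vs 1.2% click rates and only grow the list size
results = {}
for sent in (1000, 5000, 10000):
    clicks_a = round(sent * 0.010)  # assumed click count for campaign A
    clicks_b = round(sent * 0.012)  # assumed click count for campaign B
    results[sent] = confidence_b_beats_a(clicks_a, sent, clicks_b, sent)
    print(f"{sent} emails per campaign: {results[sent]:.0%} sure")
```

Under these assumptions the confidence rises with the number of emails (to roughly 83% at 5000 and 91% at 10,000), but a difference this small would still need an even larger list to reach the usual 95% threshold.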

Try the tool yourself! http://kiss.ly/p3HUAS

Lessons Learned:

- We need to do A/B testing often to see which campaign is better.

- We need to use a statistical tool to test whether the difference is significant.